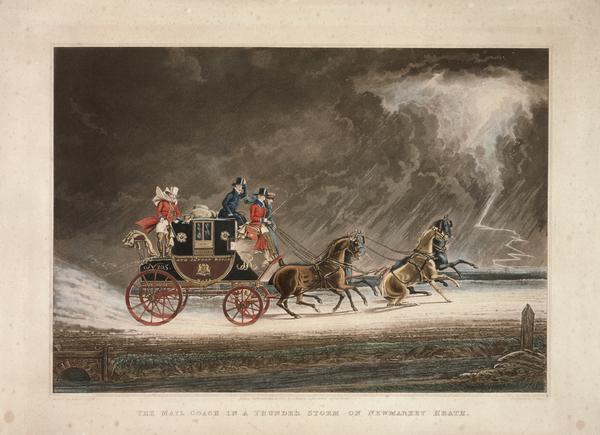

Ooooh, burny…

My fireplace in winter.

It’s nice to sit and tapper away on the Underwood in front of a big blazing inferno

Fire: Primal. Essential. The key to human survival. Used to describe everything from boiling passion and flaming love, to burning hatred and searing vengeance. What is the history of fire? How has it shaped the world? And how has the world shaped fire? Let’s find out together.

The Essence of Fire

There are innumerable milestones in the history of mankind, from walking upright, to using tools, to hunting, gathering, farming and the waging of war. But few developments in history are as important as the creation, understanding, and use of fire. For thousands of years, fire was essential to life. It heated our homes, gave us light, cooked our meals and protected us. Without fire, human migration and settlement would’ve been next to impossible, and human progress and creativity would’ve been greatly hindered. This posting will look at man’s use of fire, as well as the advancements of fire technologies and tools.

The Three Elements

A fire requires three things to burn:

Air. A fire cannot burn without sufficient oxygen.

Fuel. A fire cannot last without additional fuel to keep it going as it consumes its current supply and turns it to ash.

Heat. A fire does not burn and does not last without heat to get it going, and to keep it going.

It was early man’s understanding of these three components of fire that allowed him to use and control fire. Control it for heat, light and cooking. And control is vitally important – improperly used, fire can destroy as much as it can delight. But how do you get a fire?

To start a fire, you first need fuel. Small fuel at first – Tinder. Tinder is anything small, dry and extremely combustible. Cotton-wool, old thread, shredded cloth, dry straw, moss, grass and finely torn paper will all suffice for tinder.

On top of tinder, you require kindling, which is small pieces of wood to encourage the fire to burn and grow. Kindling wood needs to be small and dry – branches, off-cuts of planks, scrapwood, bark, etc, will all suffice.

On top of kindling, you require fuel-wood. Fuel-wood or firewood, are the larger logs, or segments of logs, which you load on top of the kindling once it’s burning sufficiently. As with the others, it needs to be dry. Start with small pieces of fuel-wood first (like thick branches) and then work your way up to larger logs or branches.

There are a million and one methods of building fires – Upside down fires, Teepee fires, log-cabin fires…the methods are endless – and so are the arguments for each one and why A is better than B. So I won’t cover that. Everyone has their own method that works for them.

But how do you LIGHT a fire? This, for centuries, was one of the hardest things to do…

Lighting a Fire

You have your kindling, tinder and firewood. Now you just need it to burn. A fire won’t burn without heat to get it going. To get heat, you need a concentration of energy. Before the advent of matches in the 1800s, fire-lighting was a laborious and at times fiddly task, and was achieved in one of two ways: Concentration of light-energy, and concentration of friction-energy.

Ever stolen grandpa’s magnifying glass and used it to burn ants? That’s starting a fire through concentrating light-energy. Specifically, concentrating the rays of the sun until they are focused on one spot for long enough that the intense heat generated causes your tinder to catch fire through solar energy!

The other method of creating fire, if the sun was not available, was to use friction. This is much more unpredictable and requires quite a bit of skill and patience, but it does work.

One of the most common ways of lighting a fire through friction was through the use of the bow-drill:

A piece of wood with a hole in it is placed on the ground over a piece of kindling-wood (the top piece of wood is used to provide stability). In the hole, a piece of tinder is placed. A wooden stake (the ‘drill’) is placed over the tinder. The bowstring is then looped around the drill, and the bow is drawn rapidly back and forth and up and down the drill.

Driving the drill back and forth at high speed over the tinder creates friction, which creates heat. At 300 degrees Fahrenheit a spark is generated from the friction, which catches the tinder. Once the tinder is lit, the bow, the drill, and the top piece of wood are removed, and the tinder is fed with kindling to start a fire.

Placing the Fire

Gathering tinder, kindling and fuel-wood for a fire and drying it out was relatively easy. So was starting a fire, given the right tools and sufficient practice. The next thing for early man to conquer was the placement of a fire.

Fires had to be built and lit with careful consideration. Failure to light a fire in a safe place could result in catastrophic, uncontrollable infernos that could destroy grasslands, forests and settlements.

Controlling Fire

The first fires were simply built and lit inside ‘firepits’. A fire-pit was an area of land cleared of grass and wood, where a hole was dug. The hole had stones placed around it to create a fire-ring and hearth, and then the fire was simply built inside the ring and let to burn. And for centuries, this was the main method of fire-control and placement.

Having an open fire in the middle of your house or room or hut or cottage or cave had its advantages and disadvantages. First – the heat was all over the place – Lovely!

The problem was…so was the smoke! Although fireplace smoke can smell beautiful and tangy (which is why we love smoked foods and wood-fired pizzas so much), uncontrolled smoke could be deadly to the people around the fireplace.

To control the smoke, or to clear it out of the building, a simple solution was just to cut a hole in the roof of the building and let the smoke shoot up there. This worked…kinda. The smoke would leave the house through the hole in the roof…eventually. It would waft up there, not flow up there. So it took a while. And if the wind was against you, then you had real strife!

The chimney, followed by its companion, the fireplace, was invented in the 12th Century (1100s), although for a long time, they were considered features found only in wealthy homes and castles. Care had to be taken in their construction of stone, or brick, and this made them expensive. But by the 1500s and 1600s, fireplaces were slowly becoming more and more commonly found in the homes of regular people.

The Fireplace

Starting in the Medieval Period, houses of varying levels of grandeur were constructed with chimneys and fireplaces. Fireplaces were built out of stone or brick, and a typical fireplace setup involved…

The Chimney or Flue

The long stone, or brick pipe or vent which channeled the smoke up and out of the building.

The Smoke-box

The chamber at the bottom of the chimney-pipe, which acted as a buffer against downdrafts.

The Fire-Box/Fireplace

This is directly under the smoke-box, and it’s where the fire itself would be located.

The Hearth

The stone or brick platform on which the firebox and chimney are built. It sometimes extends outside of the fire-box into the room, to provide extra protection against rolling logs.

The Advantages of the Fireplace

The fireplace had numerous advantages over the everyday hole-in-the-ground fire-pit. The fire was now safely contained in its own little box, with a stone chute to carry away the smoke. A sliding or hinged shutter above the firebox, the damper, allowed you to close off the fireplace chimney in inclement weather, to prevent cold drafts and rain from coming down the chimney and into the room below. A big improvement on the hole in the roof which was a permanent opening to the weather outside!

In smaller dwellings, a fireplace was used as both a heater, and as a cooker. The fire kept the room and house warm, but also provided heat for cooking. Pots hung on hooks, or placed on trivets or stands over the coals and ashes of the fire, could hold food (usually soup or stew or some variety of pottage) which could be cooked, or kept warm over the coals and flames.

In and Around the Fireplace

As the fireplace became more and more accepted as a part of people’s homes and lives, a whole industry sprang up to supply equipment and accessories for it. From the 1600s until well into the 20th century, the average home was likely to be dotted with fireplaces, and the discerning homemaker could purchase all manner of fittings for them.

Andirons

Also called ‘fire-dogs’, andirons (sold in pairs) are iron (or in more expensive models, brass) stands used to support burning logs above the hearth of the fireplace, to encourage air-flow and improve a fire’s chances of burning more completely.

Brass andirons in a fireplace

Andirons could be simple iron bars or frames, or they could be elaborate, decorative stands made of brass. Some andirons had additional bars and hooks which could be attached or removed as required, so that buckets, pans and pots could be hung over or near the fire, to allow water to boil, or to cook a simple meal.

Andirons at work, supporting a stack of burning firewood

Fireplace Grate

The grate in my fireplace

Invented in the 1600s, fireplace grates were a big advancement on andirons. While andirons could hold large logs and chunks of firewood, a fireplace grate could contain the entire fire, kindling, charcoal, fuel-wood and all, and keep it off the floor of the fireplace, improving airflow. Made of wrought iron which was forge-welded together, grates varied in size, from smaller, coal-burning grates, to much larger wood-burning grates, which could be several feet wide and several inches deep.

Fenders

Typically made of brass or iron, a fender is a wrap-around fire-guard placed on the hearth in front of the fire. It’s designed to prevent ash, coals or rolling logs from entering the room and creating a mess, or starting any unintentional fires.

A brass fireplace fender. Fenders are freestanding, and they can be moved to more easily clean the fireplace between uses

Fire-Irons

Fires were originally tended to using whatever utensils were close to hand, usually improvised. Old swords, iron bars, tree-branches and such. Eventually, pokers were created to give a person a permanent fire-tending tool. Ash-shovels, brooms and fire-tongs soon followed, and it’s these four items that typically make up the average set of fire-irons, usually stored on their own little iron or steel stand. Fire-irons are made of iron, or in more expensive sets, brass.

Fire-irons, stored on their own racks, became staples of homes around the world, and every household was likely to have at least one set. Smaller and shorter ones for coal-burning fireplaces and stoves, and larger, longer ones for wood-burning fireplaces.

Log-Cradle

Placed next to the fireplace, or directly outside the front/back door, a log-cradle (and its relation, the log-bin) became a necessity during very harsh winters.

When it became impossible to make the trek out to the wood-shed in the middle of the night, or when snow or rain proved too heavy, wood had to be stored near to the house. Log-cradles were designed to hold enough wood for anything from one night’s burning up to a week or more. These cradles are always held above the ground on legs, to stop moisture from gathering and to allow the wood to dry more effectively.

Dustbin

These days, a ‘dustbin’ is just another word for a rubbish bin or a garbage-bin. But in the days when wood and coal fires were a part of everyday life, a ‘dustbin’ was a separate and distinct entity. Specially made of metal with tight-fitting lids, carry-handles, and with raised bottoms, dustbins were constructed specifically for the task of holding household dust and fireplace ash and soot.

Storing ash from the fireplace in the dustbin was done usually only temporarily. When the bin was full, the ash would be dumped into the garden compost-heap. In large cities where this wasn’t possible, the dustbin was collected by the dustman in his dust-cart on a regular basis. The ash and dust in the bin was used for fertiliser out in the countryside.

Bellows

Fire was an important part of life for centuries, especially in places like the kitchen. Wherever possible, man created instruments which improved and sped up the creation and maintenance of fire. You could continually blow on a fire, or fan it, to give it more airflow and oxygen, but blowing is exhausting, and fanning is imprecise.

Bellows are much more precise, regulated and forceful, which is why they’re preferred over other methods of giving a fire oxygen. Giving a fire oxygen like this causes faster combustion and therefore, greater heat output.

Fireplace Reflector/Fireback

It may surprise some people, but fireplaces are not especially efficient. Crackling flames and wafting plumes of smoke give the impression of great energy and heat, but actually, only a small amount of that heat and light is projected into a given room. A fireplace is only open on one side, so only a quarter of the fire’s energy is projected into the room. The rest of the heat which the fire generated is absorbed by the iron grate, the floor, the three walls of the fireplace, or else goes up the chimney.

To improve fireplace heat-efficiency, a fireback is generally recommended. A fireback is a metallic panel placed behind the grate, between the fire and the back wall of the fireplace.

Firebacks come in one of two styles: Solid cast iron panels, or reflective steel, copper or aluminium panels (this latter called fire-reflectors). They both do the same thing, but in different ways.

An iron fireback absorbs the heat from the fire, and radiates this captured heat outwards. This increases the amount of the fire’s heat that reaches the room, heat which would otherwise be wasted by being absorbed by the brickwork on the back wall.

Antique cast iron fireback

A reflective fireback or fireplace reflector works by reflecting the heat and light of the fire out into the room. This not only increases the heat output substantially, but also reflects a lot more light into the room, creating a brighter fire.

The reflector placed behind the grate in our fireplace. A homemade affair easily fashioned out of sheet-metal, a few screws and some metal bars

Fire Screens

The Great Fire of London Screen!

Along with fenders, fireplace screens started being used in the 18th and 19th centuries. Originally just a way to cover up the fireplace when it was not being used (to hide the unsightly vision of burnt charcoal and ashes), modern fireplace screens (made of copper or brass) serve a double-purpose of also protecting the room from sparks, flying embers or rolling logs.

Chimney-Sweeping

Chim-chimney-chim-chimney-chim-chim-cheree,

a sweep is as lucky as lucky could be…

Apart from giving us possibly the worst Cockney accent ever in movie history, the otherwise wonderful Dick Van Dyke furnished those living in the 21st century with another falsehood about the history of the fire – that chimney-sweeping was a jolly old lark full of fun and games!

If only t’were true.

A fireplace that is used for the majority of the year, every year, or one which is used every day for years on end, needs to be swept regularly. The rosy-cheeked fellow who does this is the humble chimney-sweep.

Every time you light a fire in your fireplace, soot and ash are drawn up the chimney by the updraft of smoke. Over the course of years, this soot and ash builds up inside the chimney, forming black, crumbly deposits called creosote. Just like how grease in your kitchen drain prevents water from going down the pipes effectively, buildups of creosote in the chimney prevent smoke from going UP the pipes effectively – in this case, your chimney-pipe, or flue.

For this reason, it’s necessary every now and then to get your chimney swept. By a sweep. With a broom and a brush.

Men of the Stepped Gables

If you’ve ever been to Europe, you may have seen buildings with rather odd-shaped roofs, such as this:

At the peak of the roof, you can see the chimney-stack with the pots on top. Sloping away on either side is the roof. See how it’s staggered down like a staircase?

Called crow-step gables, this roofing-style was popular from the Middle Ages up to the 1700s. Although it looks very pretty and geometric, it actually serves a practical purpose: It’s a built-in chimney-sweep staircase!

In an age when ladders rarely went right up to the roof, buildings were constructed with crow-stepped gables to give the poor chimney sweep somewhere to stand and climb in relative safety, as he made his way to the chimney-top to sweep down the ashes. And it was just as well, because chimney-sweeping was rife with dangers! Rather ironic then, that chimney-sweeps are supposed to be symbols of good luck!

Up until the late 19th century, chimney-sweeping was an extremely dangerous and even lethal profession. But not always for the reasons you might suspect. Laws in the United Kingdom and the United States had to be passed, and then strengthened, before the practice of shoving boys up chimneys was finally abolished in the 1870s.

Child Chimney Sweeps

“It’s a nasty trade!”

“Young boys have been smothered in chimneys before now”

“That’s a’cause they damped down the straw afore they lit it in the chimbly to make ’em come down agin! That’s all smoke, and no blaze; whereas smoke ain’t o’ no use at all in making a boy come down, for it only sends him to sleep, and that’s wot he likes. Boys is wery obstinit, and wery lazy, gen’lemen, and there’s nothink like a good hot blaze to make ’em come down with a run…It’s humane, too!”

– “Oliver Twist”, 1837

No write-up about chimneys and chimney-sweeping could possibly be complete without a part dedicated solely to the trials and tribulations of unfortunate apprentice-sweeps. Since the earliest days of chimney-sweeping, up until the last quarter of the 1800s, children were used to sweep chimneys. It was indeed a nasty trade, to say nothing of being extremely dangerous and lethal. But what made it so?

In England especially, but also in the United States, children, usually young boys between the ages of four and ten, were sent up chimneys with small brushes to sweep down the ashes inside the chimney-flues. It sounds harmless enough, but was actually phenomenally dangerous.

Imagine the following…

It’s 1830. You’re an orphan-boy, maybe six years old. You’re apprenticed to a Master Sweep. A typical assignment had you following your master to a well-to-do house somewhere in London, to sweep the chimney.

Now understand please, that it was NOT in most cases, the master sweep who did the sweeping – It was usually the job of his apprentice-boy to do that. The youth would be given a brush, and then he would literally have to crawl into the fireplace, and then climb up the chimney from the inside! In this dark, extremely cramped environment (usually less than 1ft square), the boy had to crawl up the chimney and stop every few inches to brush down the ash inside the flue, while the master sweep down below had the cushy job of sweeping the fallen ash into sacks to be removed from the building. In the most extreme of cases, boys were forced up chimney-flues which measured just NINE INCHES BY NINE INCHES! Measure that out with your ruler and see if you could get your son, or your nephew, or grandson, to squirm through a hole that size.

Now imagine a chimney-shaft 15 feet long, and getting him to crawl up that all the way to the top, and then crawl all the way back down again. Then imagine crawling up the chimney…and losing your footing…and falling two storeys down in the dark, and breaking your ankle on the hearth below. Or even worse, imagine getting your knees jammed up against your chest inside the pitch-black chimney, and being completely and utterly wedged into the chimney-pipe. You would choke on the ash, or die of asphyxiation from the smoke or from compression-injuries from the tight squeeze.

This did happen. And frequently. The way to get a boy out was either to drag him down with a rope, or to smash the chimney-flue open with a sledgehammer to break him out – before he either suffocated due to his cramped position, or choked to death on the falling ash.

Most chimneys were not large. Usually, one chimney was shared by two or three fireplaces, all stacked up on top of each other. So the bends, crooks and corners could very easily trap a child if he lost concentration, or panicked, and got himself wedged into the brickwork.

Young Master Oliver Twist was fortunate not to become a “climbing boy”, as chimney-sweep apprentices were called. The British Government was genuinely concerned about the plight and deaths of climbing-boys, but very little was ever done. The first act of parliament to try and regulate the chimney-sweeping trade was passed in 1788, but it had little effect.

As early as the 1790s, longer, mechanical chimney-sweeping brushes had been invented, to try and replace climbing-boys, but due to the vast array of flue-types, the brushes were not always practical. Another act regulating chimney-sweeping came out in 1834, and another in 1840! But still the practice of sending boys up chimneys continued.

In the 1800s, the modern chimney-brush (still used by sweeps today, with big bushy brush-heads and segmented, screw-on handles) was invented. But its introduction was largely ignored by chimney-sweeps. The new brushes were expensive and burdensome to carry around. It was much easier to pay a poor, starving peasant family, or a pauper family living in the East End of London, ten shillings, or five shillings, to take their children away and make them climbing-boys.

Armed with scrapers and brushes, and usually stripped naked, these children were shoved up chimneys to clean them from the inside out. And not just for cleaning chimneys, but also to put out chimney-fires! Imagine being a 10-year-old waif, crawling up a chimney with a flaming hot blaze inside it, with a wet towel to extinguish it!

Although presented in a comical fashion, mocking the chimney sweep’s accent in his book, Dickens’ description of the working-conditions of climbing-boys was incredibly accurate, and some master sweeps really did light fires in fireplaces with the climbing-boys still up the flues! Unsurprisingly, some kids were literally roasted alive.

It was not until 1875, and the disaster attending a boy named George Brewster (aged 12) that sending boys up chimneys was finally outlawed in England! Poor George crawled up a chimney at the Fulbourn Hospital in Cambridgeshire, England.

Like so many hapless boys before him, he got hopelessly jammed in the flue. Sledgehammers and picks had to be brought out to smash the entire chimney down to get him out. He was dragged out alive, but died shortly after. The hospital staff were so appalled that they brought the incident to the attention of the police. George’s master sweep was given a sentence of six months’ hard labour on a charge of Manslaughter as a result.

George’s death in 1875 resulted in the passing of the Chimney Sweeper’s Act of 1875, which finally ended the practice of sending boys up chimneys.

Modern Chimney-Sweeping

After the 1875 abolition of child chimney-sweeps, sweeps had to rely on brushes to do their job for them. Or at least, in the United Kingdom. The practice of climbing boys continued in the United States, even after it had been abolished in England.

The standard chimney-sweeping brush has a round or square head, with stiff-bristles made out of wire for added abrasive action. The brush is fed up (or down) the chimney, and additional extension-rods are added to the brush to push it further up or down the chimney to scrape down the ash and soot.

These days, chimney-sweeps also use vacuum-cleaners and video-cameras to clean and inspect chimneys, but it remains a dirty, dusty job even today.

Like a Tinderbox

For centuries, the only way to light a fire was to do it the old-fashioned way – either through friction or concentrated sunlight. Eventually, mankind discovered that by striking certain materials together, sparks could be generated easily, and a fire could be started much more quickly.

To do this required three things: Flint, steel, and tinder.

Flint is a rock which can be easily chipped and fractured. When chipped to an angle, and struck or scraped down a piece of steel (such as a disc or a rod), sparks are generated by the friction, or the impact of stone and steel. These sparks, (shavings of steel, in fact), landing on a piece of tinder, would start a fire. Usually, flint and steel were kept together, along with a small, tightly-sealed container which held the tinder. This became known as a ‘tinderbox’. Tinderboxes had to be tightly sealed to keep the tinder as dry as possible so that it would catch fire instantly when sparks were showered upon it after flint and steel had been struck.

Even today, we have an expression about how something catches fire “like a tinderbox”, or how a potentially volatile situation is “like a tinderbox”, echoing the extremely combustible contents of these little metal boxes.

Striking a Light

For centuries, starting a fire was a fiddly, imprecise business. It was something which took skill and practice. Things improved when people realised that they could use steel and flint, but the absolutely best, idiot-proof way to light a fire came with striking matches.

Matches have a long history, and it goes all the way back to Ancient China. But modern striking matches, of the kind we purchase and use today, were invented in the 1800s. The first of this kind came out in 1816, and was invented by Frenchman Francois Derosne. Early matches were tipped with sulphur and white phosphorus.

These early French matches were fiddly to use and unpredictable. An improved version by Englishman John Walker, a chemist, was invented ten years later, in 1826, and is the basis of all matches we have today.

Walker’s early friction-matches were improved in 1829 by Scottish inventor Sir Isaac Holden (1807-1897), and were sold under the brand-name of ‘Lucifers’. Although they were an improvement, ‘Lucifer’ matches didn’t last, but the brand-name became a common nickname for matches during the 1800s and early 1900s, and matches were commonly referred to as ‘Lucifers’. The war song ‘Pack up your Troubles’ immortalised them with the line:

“While you’ve a Lucifer to light your fag, smile, boys, that’s the style”

By the 1830s, more reliable friction-matches had been invented. These matches were stored in smart silver or gold cases called vestas, which were commonly worn on pocketwatch chains and carried around with a gentleman, since one never knew when one might need a light. These vesta-cases often had corrugated striking-plates on the sides or bottom, so that a match could be retrieved and lit from the same container.

An antique silver vesta case. Note the striking-ridges on the bottom

Matches continued to be phosphorus-tipped, strike-anywhere friction matches until the last decades of the 1800s. Although convenient in the fact that these matches could catch fire after being struck against any sufficiently rough surface (even the sole of your shoe!), their convenience came at the price of being a fire-hazard in that they could be too-easily ignited.

On top of that, white phosphorus matches were extremely poisonous. The unfortunate ‘match-girls’ who made these things, by dipping the matchsticks into phosphorus solution developed a crippling infection called ‘Phossy Jaw’. In essence, the phosphorus fumes seeped into the body and rotted out your jaw-bone, resulting in bone-infections, gum-infections, losing your teeth…eugh.

This was stopped in the later 1800s when white phosphorus was replaced with safer red phosphorus, which is still used today.

Starting in the mid-1800s, poisonous, dangerous, white-phosphorus friction-matches were gradually replaced by red-phosphorus safety-matches. These were far less poisonous, and also much safer to handle, because the ingredients needed for ignition were no longer all on the match-head at the same time. The match-head carried the sulphur and an oxidising agent (typically potassium chlorate), while the red phosphorus was painted onto a striking-strip on the side of the matchbox. Behold the modern safety-match!

The safety-match which we know today works because when you strike the match against the strip on the box, the friction brings the phosphorus on the box into contact with the compounds on the match-head, and the heat generated causes the match-head to ignite. With the two components of a burning match now separated from each other, it is next to impossible for a safety-match to be lit purely by being struck against an ordinary abrasive surface. This made them safer to handle and store than traditional strike-anywhere matches.

Mankind Roasting on an Open Fire

For centuries, heating, lighting and cooking were done with an open flame, using candles, lamps and fireplaces. The Industrial Revolution of the 1700s saw the first practical iron stoves being built in Europe. Made of cast iron, these stoves allowed people, for the first time, to do more of their cooking at home.

Previously, cooking on an open fire was fiddly and tricky – You were limited by what you could hang over the flames or sit on the hearth. The first stoves allowed mankind to fry, bake, steam, boil and roast a much greater variety of foods than a simple open fire would have permitted. This greater control of fire vastly improved home comfort.

Prior to the invention of the cast iron range stove, baking was a specialty art. The only people who could bake were the people who had ovens. And ovens were huge brick and stone structures which were expensive to build and took up a lot of precious space. Not everyone had them, and most people didn’t. To bake your pies, cakes and loaves, you had to take them to the village bake-house to be baked.

With the stove, it was now possible to bake at home! And with a much better fuel, too.

It was at this time that people started switching from wood as a fuel-source to coal instead. Coal had advantages, but also disadvantages. Coal burns hotter than wood, and so produces much more heat for the same amount of fuel. The problem is that burning coal produces nasty black smoke! Eugh!

Wood-smoke is lovely. Everyone loves wood-smoke. It smells wonderful. People have smoked meat, cheese, fish and all other sorts of things in wood-smoke for centuries. It preserves the food and gives it a lovely flavour! Yum! But mixing coal-smoke with your food was apt to put you off your appetite, and to prevent this, coal-burning stoves and fireplaces did everything to channel the smoke away from the rest of the house.

Fires in the 21st Century

In the Developed World, the wood or coal-fueled fire is no longer the primary source of heat or light. Most of us cook on gas or electric stoves and heat our homes with heaters, central heating or split-system air-conditioners. In other places around the world, though, fires continue to burn bright. But what should you do if you want to get a fire going?

Using your Fireplace

Perhaps you live in an older house with a fireplace and you would like to start using it to warm the house in winter? What to do, what to do, what to do??

The first thing to do is to ensure that your fireplace is a working fireplace. By this, I mean that all the fittings are functional and undamaged. The chimney should be clear and undamaged, and the damper should open and close smoothly. If you are unsure about the condition of your chimney, then you should have it checked by a professional chimney-sweep. Or you can sweep it yourself – All you need is a ladder, a flue-brush (and extension-rods), a few drop-sheets and a vacuum cleaner (or a shovel and bucket).

Whenever a chimney is swept, you’re scraping out all the soot and tarry residue which has caked onto the inside of the chimney. The tarry stuff is called creosote. Here’s a picture:

Scraping this crap out of your chimney-pipe ensures that the air moves smoothly up the flue and that the smoke has an unimpeded passage to the outside world.

To prepare the fireplace, you need to ensure that you have all the right bits and pieces. The necessary bits and pieces are listed and illustrated earlier on in this posting.

Lighting a Fire…

There are a dozen methods for building and lighting a fire. Here are just two methods, and the bare essentials.

To light a fire, you will need a source of ignition – matches, a cigarette lighter, or flint and steel if you want to do it the old-school way.

You will also need tinder. Tinder is anything small, dry and shriveled. Grass. Straw. Shredded, scrunched or twisted paper. Old cloth. Tinder goes first, at the bottom of the fireplace grate.

On top of the tinder, you set up your kindling. Kindling is small, dry pieces of wood, usually old branches or larger pieces of wood split into smaller ones. Kindling should be small enough that you can grab a whole bundle of it in one hand. If you can’t, it’s probably too big.

Light the tinder and wait until the kindling is going. Once it is, you can lay on your pieces of fuel-wood. Start with smaller pieces and work your way up to progressively larger pieces.

Waiting for the kindling to light before going further is important. It allows the fire to get a foothold. But it also allows your chimney a chance to warm up. You can’t light a fire in a cold fireplace (trust me, I’ve tried. It doesn’t work). Letting the kindling burn for a bit sends hot air up the chimney. This drives out or warms up any cold air in the chimney, and establishes an updraft – a current of air that draws more air into the fireplace below, which stimulates the fire and encourages it to burn more intensely.

With this going, add on your fuel-wood in increasingly large segments and logs. You have a fire!

As always, keep an eye on your fire. And if you’re not going to, then make sure that the safety-screen is across the fireplace to prevent accidents – Rolling logs do happen, and you don’t want to come back to your living-room to find one burning a hole in your carpet. You might want to keep a small bucket of water or a fire-extinguisher nearby, in case the unforeseen should occur.

Fire-Building Methods

The two most common fire-building methods are the Upside Down Fire, and the Tepee Fire.

The Tepee Fire works on the age old rule that fire always burns UPWARDS. So any extra fuel should be placed above and outwards from the fire’s point of origin. You put your tinder in a little pile in the middle of the fireplace, then lean kindling sticks against it, like an American Indian tent, or ‘tepee’. Then lay fuelwood around it in the same manner, leaving a little door open at one side so that you can stick a match in and light the tinder.

The other fire-building method which has gained a lot of popularity is the Upside Down Fire.

While the Tepee Fire works with almost any size of wood, the Upside Down Fire works best with smaller, thinner pieces of wood. It’s built as follows:

Get your fuel-logs and stack them in a criss-cross pattern, building up a tower of wood. At the top, build your fire-tepee with tinder and kindling, and a small amount of fuelwood. Then light the fire at the top.

The reason it’s called an UPSIDE DOWN fire should now be apparent – It goes AGAINST the rule that fire burns from the bottom up. The Upside Down Fire works in that the flaming materials burn DOWNWARDS through the tower of fuel-wood. As it does so, any unburned portions of the tower collapse inwards, further fueling the fire, until it reaches the very bottom, and burns out. Upside Down fires are meant to be maintenance free – Build it, light it, forget about it. Ideal for camping. Or lazy people.

Both methods work. It’s just a matter of which one is best for you in your situation.


